Google Analytics and Adobe Analytics were built for an era where every click was a human, every session was a person, and cookies could be trusted to tell you who was who. That era is over.

And yet, traditional analytics dashboards look the same. The reports still generate. The numbers still go up and to the right.

One major media company we spoke to recently described their analytics situation with a single word: fog. Traffic was up. They could see sessions, pageviews, and engagement ticking steadily upward. What they couldn't see was who, or what, was actually on their site. The fog isn't that you can't see anything. It's that you can't trust what you're seeing.

The KPI Massacre: What's Breaking

Let's be specific about what's breaking in your analytics:

Unique Visitors: Inflated 20-30%

Fraud0's "Unmasking the Shadows 2025" report found that roughly one in five reported visits on typical publisher sites aren't human at all. Your reported audience is larger than your real one; the only open question is by how much.

Engagement Metrics: Corrupted in Both Directions

Crawl-style bots create phantom "power users." These are sessions with near-zero bounce rates, abnormally long durations, and hundreds of pageviews. They look like your most engaged readers, but they're not reading anything. Hit-and-run bots do the opposite: zero-second sessions, 100% bounce rates, dragging your averages down. So your actual human engagement is being masked by noise at both ends of the spectrum.
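To make the two-sided distortion concrete, here is a minimal sketch that flags both patterns with simple thresholds and compares the averages. The `Session` fields, the sample data, and every threshold are assumptions chosen for illustration; they do not come from Fraud0 or from any analytics product.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Session:
    pageviews: int
    duration_s: float  # total session duration in seconds


def label(session: Session) -> str:
    """Label a session using illustrative (assumed) thresholds."""
    # Crawl-style pattern: far more pageviews than a person plausibly reads.
    if session.pageviews >= 100:
        return "phantom_power_user"
    # Hit-and-run pattern: a single page and no measurable time on it.
    if session.pageviews <= 1 and session.duration_s == 0:
        return "hit_and_run"
    return "unflagged"


# Hypothetical sample of sessions.
sessions = [
    Session(pageviews=3, duration_s=140.0),
    Session(pageviews=2, duration_s=95.0),
    Session(pageviews=250, duration_s=3600.0),  # crawl-style
    Session(pageviews=1, duration_s=0.0),       # hit-and-run
    Session(pageviews=1, duration_s=0.0),       # hit-and-run
]

all_avg = mean(s.pageviews for s in sessions)
unflagged_avg = mean(s.pageviews for s in sessions if label(s) == "unflagged")
print(f"pages/session, all traffic: {all_avg:.1f}")
print(f"pages/session, unflagged only: {unflagged_avg:.1f}")
```

Thresholds like these are blunt: a real reader who bounces immediately would also be flagged as hit-and-run, which is why the sketch is a way to see the effect rather than a detection method.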

Ad Impressions: ~15% Served to Machines

Fraud0's analysis found 14.9% of ad impressions are delivered to bots: 8.7% confirmed, 6.2% suspected. Those impressions cost money, yet they generate zero value. And because fraudulent clicks convert at roughly half the rate of legitimate ones, a healthy-looking click-through rate says little about real demand.
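As a back-of-the-envelope illustration, the bot share can be turned into a spend figure. The two shares below are the Fraud0 numbers quoted above; the impression count and CPM are hypothetical values picked only to make the arithmetic visible.

```python
# Shares from the Fraud0 figures quoted above.
confirmed_bot_share = 0.087
suspected_bot_share = 0.062
bot_share = confirmed_bot_share + suspected_bot_share

# Hypothetical campaign numbers, for illustration only.
impressions = 1_000_000
cpm_usd = 5.00  # cost per thousand impressions

total_spend = impressions / 1000 * cpm_usd
wasted_spend = total_spend * bot_share
print(f"bot share: {bot_share:.1%}")
print(f"spend on bot impressions: ${wasted_spend:,.2f} of ${total_spend:,.2f}")
```

Under these assumed inputs, about $745 of a $5,000 buy goes to impressions no human saw.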

Attribution: Completely Broken

When an AI agent scans your article to answer a human's question—a human who never actually visits your site in their browser—your ad attribution records... what, exactly? An impression? A session? Your content was consumed, but no human eyes saw your page, no ads were viewable, and your attribution model thinks you delivered value you can't monetize.

All of these issues combined represent a strategic crisis that is getting progressively worse. Every decision you make on these numbers—ad pricing, content strategy, product development, hiring—is now built on compromised foundations.

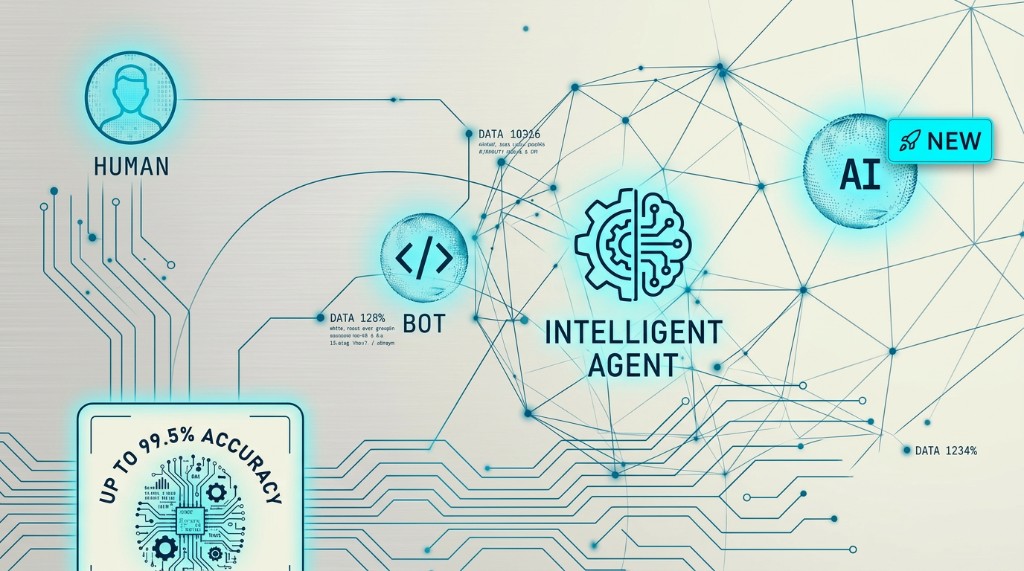

Not All Machines Are Equal

Here's where things get complicated. Not every bot is bad. Not every machine is malicious. And the spectrum of non-human traffic now visiting your site requires a more sophisticated response than simply "block or allow."

1. Legitimate Crawlers

Googlebot alone accounts for 4.5% of all HTML request traffic. That's more than all AI bots combined. These are the crawlers you want. They index your content, surface it in search results, and drive organic traffic. Blocking them would be self-sabotage.

2. Unauthorized Scrapers

Next, you have unauthorized scrapers. These AI crawlers systematically harvest content to train models or power AI services, often without permission or compensation. This is the traffic you might want to block, monetize through licensing deals, or at minimum, understand.

3. Agentic Browsers

This is the new category, and the most disruptive. Tools like Perplexity Comet, ChatGPT Atlas, and Google's Gemini-in-Chrome don't just crawl. They browse. They act on behalf of humans. They log in, navigate, compare, and complete tasks.

According to HUMAN Security, agentic traffic grew 1,300% between January and August 2025. Then it jumped another 131% month-over-month from August to September as commercial tools rolled out.

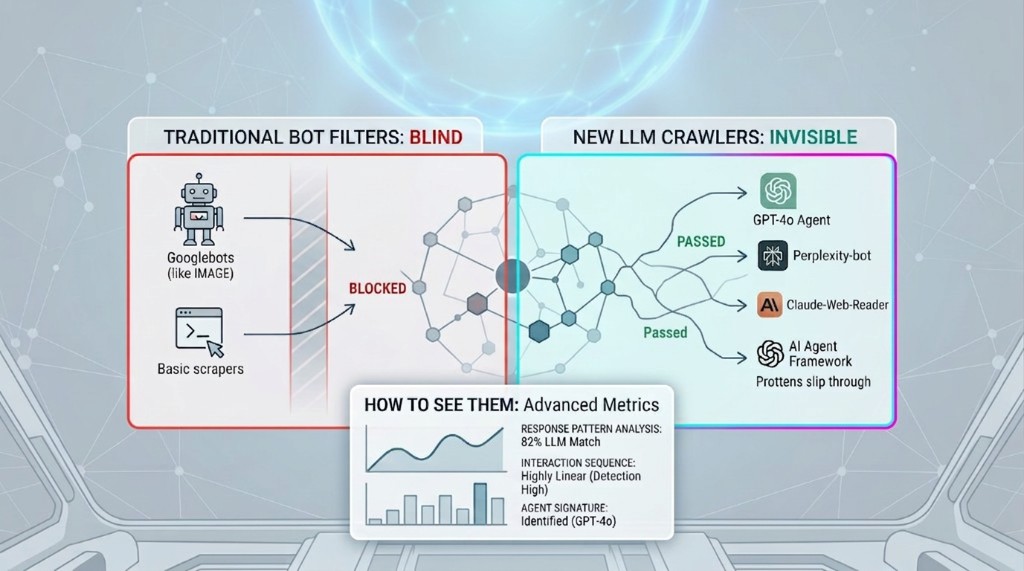

The Agentic Blind Spot

While legacy bots inflate your metrics, much agentic traffic doesn't show up at all. Agents that fetch HTML and APIs directly never execute your analytics scripts. No script execution, no pageview recorded. A real human delegated a real task to an AI agent. It visited your site, browsed your content, maybe even influenced a subscription. Your analytics recorded nothing.

"The more legitimate the bot, the more invisible it becomes."

Why Media Gets Hit Hardest

If you're in media and entertainment, you're facing a double hit that other industries don't experience.

The Zero-Click Problem

Referral traffic from search is already under pressure. But agentic browsers add a new layer: AI that visits your site on behalf of a user who never sees it. As one publishing executive told Digiday: "If the browser is disintermediating us—that's a whole new level of disintermediation."

7x More Likely to See AI Bot Traffic

Arc XP's analysis found that media sites are 7x more likely to see AI bot traffic than average websites. That's not because bots find your content interesting. It's because your content is valuable, especially for training models, generating answers, and summarizing news.

Your content is the raw material for AI training. And your content is the destination for AI-generated answers. You lose on the way in, and you lose on the way out.

The Practical Damage

Consider this scenario: Your "engaged readers" metric is up 40%, but subscription conversions are flat. The editorial team is celebrating. The revenue team is confused. The reality? A significant portion of that "engagement" is answer-fetching agents scraping your content to power AI responses elsewhere.

The Framework: Detect → Analyze → React

The good news: this problem is solvable. But it requires a fundamental shift in how you think about measurement. You can't filter what you can't see. And you can't see agentic traffic with client-side JavaScript tags alone.

1. DETECT

Agents that fetch pages directly don't execute JavaScript. If your analytics depend on a script firing in a human-operated browser, that traffic simply never appears. So the first step is to augment your data collection.

- Server-side tracking that captures HTTP requests regardless of script execution

- Behavioral fingerprinting that looks beyond user-agent strings

- ML models trained on your actual traffic patterns to identify anomalies

A practical starting point is to compare what your web servers actually received against what your analytics platform recorded. The delta is your blind spot.
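That comparison can be sketched in a few lines. The example assumes you have already reduced your server access logs to a list of HTML page paths and exported per-path pageview counts from your analytics platform for the same period; both inputs and the `blind_spot` helper are hypothetical.

```python
from collections import Counter

# Hypothetical inputs: page paths seen in server access logs versus
# pageviews reported by the client-side analytics tag for the same period.
server_requests = ["/news/a", "/news/a", "/news/a", "/news/b", "/news/b", "/about"]
analytics_pageviews = {"/news/a": 1, "/news/b": 2}


def blind_spot(server_paths, tagged_counts):
    """Return per-path counts of requests the analytics tag never recorded."""
    served = Counter(server_paths)
    delta = {}
    for path, count in served.items():
        missing = count - tagged_counts.get(path, 0)
        if missing > 0:
            delta[path] = missing
    return delta


delta = blind_spot(server_requests, analytics_pageviews)
total_served = len(server_requests)
total_missing = sum(delta.values())
print(delta)
print(f"unrecorded share: {total_missing / total_served:.0%}")
```

The delta is an upper bound on non-script traffic, not a clean agent count: visitors with ad blockers or disabled JavaScript land in it too, so it tells you where to look rather than what you will find.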

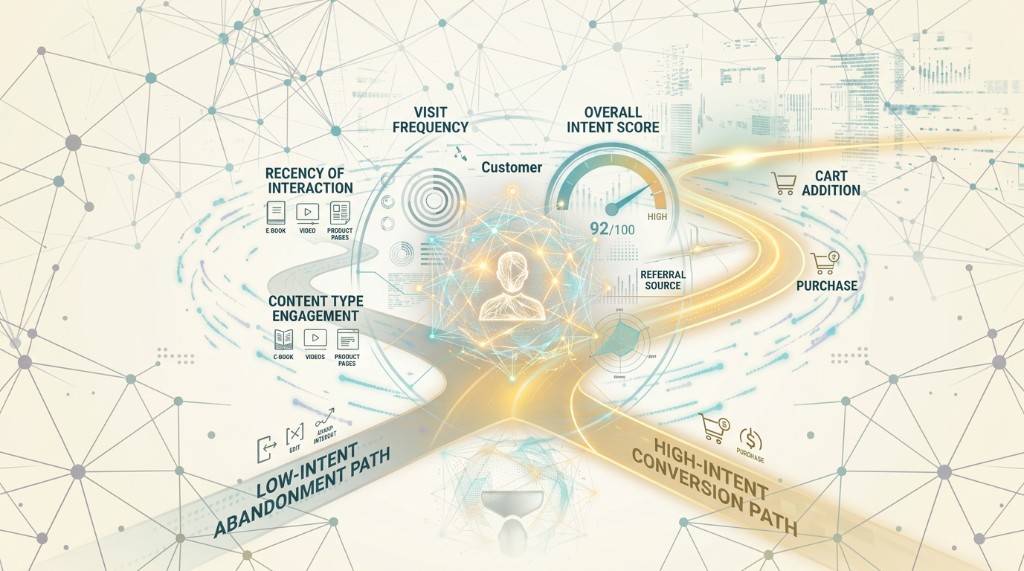

2. ANALYZE

Once you can see the traffic, you need to segment it properly. Four buckets minimum:

- Humans — Your actual audience

- Legitimate bots — Search crawlers, authorized tools

- Unauthorized scrapers — Training data collection, content theft

- Agentic browsers — AI acting on behalf of humans

Each requires different treatment. Each affects your metrics differently. And you need parallel KPI views: human-only metrics for revenue decisions, full-traffic metrics for infrastructure planning and content strategy.
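A minimal sketch of those parallel views, assuming visits have already been classified upstream into the four buckets. The segment names, the sample data, and the `kpi_views` helper are illustrative assumptions, not part of any particular product.

```python
from collections import defaultdict

SEGMENTS = ("human", "legitimate_bot", "unauthorized_scraper", "agentic_browser")

# Hypothetical, already-classified visits: (segment, pageviews).
visits = [
    ("human", 4),
    ("human", 2),
    ("legitimate_bot", 30),
    ("unauthorized_scraper", 120),
    ("agentic_browser", 6),
]


def kpi_views(classified_visits):
    """Build per-segment totals plus human-only and full-traffic views."""
    per_segment = defaultdict(lambda: {"visits": 0, "pageviews": 0})
    for segment, pageviews in classified_visits:
        if segment not in SEGMENTS:
            raise ValueError(f"unknown segment: {segment}")
        per_segment[segment]["visits"] += 1
        per_segment[segment]["pageviews"] += pageviews
    full = {
        "visits": sum(s["visits"] for s in per_segment.values()),
        "pageviews": sum(s["pageviews"] for s in per_segment.values()),
    }
    human = dict(per_segment.get("human", {"visits": 0, "pageviews": 0}))
    return {"human_only": human, "full_traffic": full, "per_segment": dict(per_segment)}


views = kpi_views(visits)
print("human-only:", views["human_only"])
print("full traffic:", views["full_traffic"])
```

Keeping both views side by side is the point: in this sample the human-only view shows 6 pageviews while the full-traffic view shows 162, and each number answers a different question.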

3. REACT

The conversation in the industry has been largely defensive—block the bots, filter the traffic, protect the metrics. But that's only half the story.

Think about it: if an AI agent is acting on behalf of a genuine customer with genuine subscription or engagement intent, blocking it means blocking the sale. Successful publishers in 2026 won't be the ones who keep agents out. They'll be the ones who figure out how to serve both audiences—humans who browse visually and agents who parse.

The Opportunity

While the data on agentic browsing is alarming, it isn't all doom and gloom. Buried in the threat is genuine opportunity.

87% of AI Agent Visits Are Product-Related

HUMAN Security found that 87% of AI agent page visits are product-related. That's not random crawling. That's commercial intent—real customers delegating real purchase research to AI assistants.

The trajectory is clear: agentic traffic grew 1,300% in the first eight months of 2025, then jumped another 131% month-over-month as commercial tools rolled out. By mid-2025, 1 in 50 publisher visitors was an AI agent, up from 1 in 200 at the start of the year.

Gartner predicts traditional search volume will drop 25% by 2026. The front door to the web is changing. Companies that can see and serve agentic traffic will capture the value. The rest will be invisible.

What Success Looks Like

The companies that get this right won't just survive the agentic era. They'll use it as a competitive advantage. They'll:

- Know which agents are driving revenue

- Optimize experiences for both humans and machines

- Negotiate licensing deals from a position of knowledge, not ignorance

- Build detection capabilities that evolve with the threat

What Modern Measurement Looks Like

The industry is shifting toward server-side measurement. The IAB Tech Lab launched its Trusted Server framework in March 2025, signaling that the browser-dependent era is ending. But frameworks are just frameworks. Implementation is what matters.
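For a sense of what server-side collection means at its simplest, here is a sketch of a WSGI middleware that records every request before the application handles it, whether or not any script later runs in the client. It is not the IAB Tech Lab framework and not any vendor's implementation; `RequestLogger`, `demo_app`, and the recorded fields are hypothetical names chosen for illustration.

```python
import time


class RequestLogger:
    """WSGI middleware that records every request before the app handles it."""

    def __init__(self, app, sink):
        self.app = app
        self.sink = sink  # any object with an append() method, e.g. a list

    def __call__(self, environ, start_response):
        # Recorded on the server, so it does not depend on client-side scripts.
        self.sink.append({
            "ts": time.time(),
            "method": environ.get("REQUEST_METHOD", ""),
            "path": environ.get("PATH_INFO", ""),
            "user_agent": environ.get("HTTP_USER_AGENT", ""),
        })
        return self.app(environ, start_response)


def demo_app(environ, start_response):
    """Stand-in application used only to exercise the middleware."""
    start_response("200 OK", [("Content-Type", "text/plain")])
    return [b"hello"]


records = []
app = RequestLogger(demo_app, records)

# Simulate one request without starting a server.
environ = {
    "REQUEST_METHOD": "GET",
    "PATH_INFO": "/news/a",
    "HTTP_USER_AGENT": "ExampleAgent/1.0",
}
body = app(environ, lambda status, headers: None)
print(records[0]["path"], records[0]["user_agent"])
```

A production setup would write to durable storage instead of a list and would need to decide which request fields it may keep under its privacy policy; the sketch only shows where the capture point sits.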

Why Legacy Analytics Can't Catch Up

Across media customers, a consistent pattern is emerging: analytics platforms undercount total traffic by 30-50% while overcounting "engaged" humans. As a result, publishers are making pricing and editorial decisions on compromised data.

It's tempting to assume Google will solve agentic measurement once Chrome gets agentic capabilities. But Google is not a neutral actor. The company operates the world's largest advertising network and the dominant measurement tool. Exposing how much inventory is consumed by non-humans doesn't serve that interest.

The WysLeap Approach

WysLeap's privacy-first analytics platform addresses these challenges directly:

- Server-side collection: Captures requests regardless of whether JavaScript executes, so agentic traffic that bypasses tags still gets recorded

- Behavioral fingerprinting: Uses ML-powered fingerprinting to identify visitors without cookies, detecting anomalies that indicate bot activity

- Real-time detection: Identifies bot patterns, agentic browsers, and suspicious activity as it happens, not days later

- Human-only metrics: Separates human traffic from bots, giving you accurate KPIs for revenue decisions

- Privacy-first: All identification is anonymous and GDPR-compliant by default—no cookies required

The First Step Is Visibility

You can't optimize what you can't see. The companies still waiting for the industry to figure this out will find themselves permanently behind. The stable digital order is over. We're in a disruptive phase with new players and new rules.

Media companies that thrive will be the ones that move quickly through the stages of grief and accept the imperative to adapt.

Ready to See What You're Missing?

WysLeap provides real-time bot detection and human-only analytics, giving you the visibility you need to make accurate business decisions. See the difference between humans and machines, and optimize for both.

Siva J.P.

Privacy Research Lead at WysLeap